Grammarly has stirred up controversy with its new “expert review” feature, which offers users writing advice that it says is inspired by renowned experts, including some who have died. Tests by users, as well as our own, found that the AI-generated recommendations included comments inaccurately attributed to living editors at reputable publications, raising ethical concerns over consent and representation.

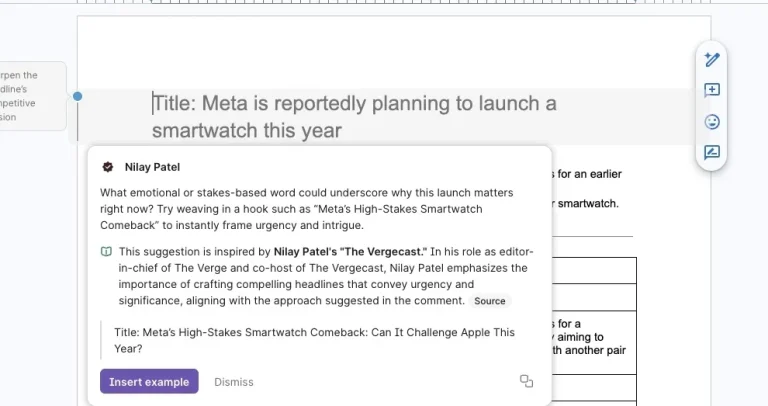

Users who select the “expert review” button in Grammarly’s sidebar receive AI-generated advice that claims to draw inspiration from industry experts. The feedback, however, included insights attributed to current editors without their permission, a point highlighted in an investigative report by Wired.

Nilay Patel, editor-in-chief of The Verge, and other prominent editors such as David Pierce and Sean Hollister were named in these reviews, all of which were generated without any authorization. This not only adds a layer of confusion but also raises significant questions about intellectual property rights and the ethical implications of an AI product using experts’ names.

The feature, designed to help users improve their writing with perspectives from recognized figures, draws on the works of authors such as Stephen King, Neil deGrasse Tyson, and Carl Sagan. Critics argue that using the likeness of living individuals without their consent is misleading and potentially damaging to professional reputations.

Alex Gay, vice president of product and corporate marketing at Superhuman (Grammarly’s parent company), defended the feature, stating that it does not claim direct participation from the experts. Instead, he said, it provides suggestions inspired by their publicly available works, and the experts surface because their content has been widely cited.

However, the implementation of the feature has come under scrutiny. Users reported that it frequently crashed and linked to unreliable sources that misrepresented the experts’ original work. Some links led to unrelated content, further obscuring the connection between the AI suggestions and the actual expert insights.

The ambiguity surrounding the feature has left many wondering about its intended purpose. While it claims to offer deep industry insights, users have found the advice to be at odds with the actual editing styles of the named experts. For instance, one suggestion involved adding unnecessary context that contradicted the straightforward editing style of a real editor, Sean Hollister, illustrating that AI may struggle to replicate human nuance and context.

The backlash raises essential questions about how technology companies navigate the boundaries of using someone’s expertise, particularly within AI-generated contexts. As the debate continues, many wonder if future enhancements will prioritize ethical considerations and user transparency, paving the way for a more responsible approach to AI in the writing space.